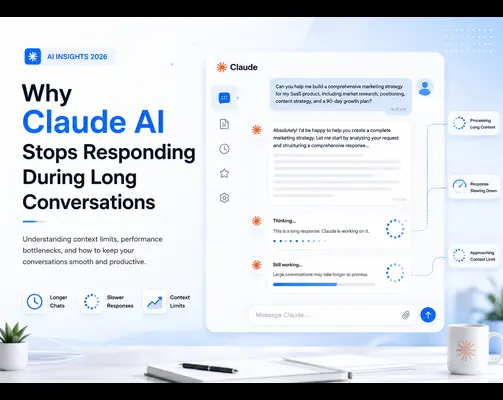

Claude AI Slowing Down Or Stopping Mid-Conversation? Learn What Causes Response Issues And Discover Simple Ways To Improve Workflow Efficiency And Reduce Token Overload.

Parix AI Team

Introduction

The world of artificial intelligence has significantly changed how businesses operate today. Tools such as Claude AI have become highly useful for completing various tasks, from automating routine work to creating high-quality content.

Claude AI is now commonly used by thousands of businesses around the world because of its efficiency, flexibility, and practical features.

Developed by Anthropic, Claude AI is one of the most effective AI assistants available today. It can be used for long-form writing, coding, research, customer service automation, and many other business tasks.

Its ability to provide consistent and helpful responses throughout a conversation makes it a preferred choice for many businesses and professionals.

However, despite its many benefits, Claude AI can sometimes frustrate users by suddenly stopping during interactions.

This issue rarely happens by chance. In most cases, Claude becomes unresponsive because of clear and recognizable factors. Once these causes are identified, the solution becomes much easier to apply.

This step-by-step guide explains why Claude AI may stop responding, what causes this issue, how to identify it, and most importantly, how to avoid it in the future.

How Claude AI Interprets Conversations

To understand why Claude AI sometimes provides poor responses or stops replying altogether, it is important to first understand how the system processes conversations.

Unlike traditional chatbots that only analyze the most recent message, Claude AI reviews the entire conversation before generating a new response.

This includes all messages sent by the user, every response generated by Claude, uploaded files, and any instructions provided during the interaction.

This approach allows Claude to maintain context, remember important details, and generate more accurate and consistent responses.

However, as the conversation becomes longer and more complex, Claude has to process a much larger amount of information.

All of this information is processed within what is known as the context window, which represents the maximum amount of information the AI model can analyze at one time.

Once this context window approaches its limit, Claude AI may begin experiencing performance issues.

As a result, the system may slow down, generate incomplete or inaccurate responses, forget previous instructions, or freeze entirely during the conversation.

Understanding this mechanism is essential because it explains why many solutions to Claude AI response problems involve reducing conversation length, simplifying prompts, and managing overall interaction complexity.
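To make the growth concrete, here is a minimal sketch of how much text has to be read before each reply when the full history is reviewed on every turn. The function name and the token figures are invented for illustration; they are not measurements from Claude.

```python
def tokens_processed_per_turn(turn_sizes):
    """Return how many tokens are read before each reply.

    turn_sizes: list of (user_tokens, reply_tokens) pairs, one per turn.
    Assumes the whole history is re-read on every turn.
    """
    history = 0
    processed = []
    for user_tokens, reply_tokens in turn_sizes:
        history += user_tokens      # the new user message joins the history
        processed.append(history)   # everything so far is read before replying
        history += reply_tokens     # the reply then joins the history too
    return processed


if __name__ == "__main__":
    # Ten identical turns with made-up sizes: 200-token prompt, 800-token reply.
    print(tokens_processed_per_turn([(200, 800)] * 10))
```

Even with identical turns, the amount read before each reply keeps climbing, which is why shortening the conversation is such a common fix.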

The Context Window Explained

Understanding what a context window is and why it matters is essential when working with any large language model, including Claude AI.

You can think of the context window as a large workspace where Claude AI stores and processes information during a conversation.

At the beginning of a new conversation, this workspace is mostly empty, allowing Claude to respond quickly and efficiently.

However, as the discussion continues, more information starts accumulating within the workspace. This includes user messages, Claude’s responses, uploaded documents, instructions, edits, revisions, and additional prompts.

Before generating each new reply, Claude reviews all of this information to maintain context and provide accurate responses.

Eventually, when too much information fills the context window, Claude may struggle to process everything efficiently.

As a result, response generation may become slower, outputs may lose accuracy, and the system can sometimes freeze or stop responding entirely.

Claude AI currently offers one of the largest context windows among modern AI assistants, with some versions supporting up to 200,000 tokens.

Even with such a large capacity, very long conversations, especially those involving multiple uploaded files, can eventually reach the system’s processing limits.

Understanding how close a conversation is to these limits is an important skill, as it helps users prevent performance issues and maintain smooth interactions with Claude AI.
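One rough way to keep an eye on this is to add up estimated token counts for everything in the conversation and compare the total with the window size. The sketch below assumes the 200,000-token figure mentioned above and a hypothetical 75% warning threshold; both should be adjusted to the model and workflow you actually use.

```python
CONTEXT_WINDOW_TOKENS = 200_000  # figure quoted above; varies by model
WARN_FRACTION = 0.75             # hypothetical threshold, not an official value


def context_usage(item_tokens, window=CONTEXT_WINDOW_TOKENS):
    """Return the fraction of the window used by the given token counts."""
    return sum(item_tokens) / window


def should_start_fresh(item_tokens, window=CONTEXT_WINDOW_TOKENS,
                       warn=WARN_FRACTION):
    """True once estimated usage reaches the warning threshold."""
    return context_usage(item_tokens, window) >= warn


if __name__ == "__main__":
    # Made-up estimates: two uploaded files plus the chat so far.
    estimates = [90_000, 45_000, 30_000]
    print(f"{context_usage(estimates):.0%} used")
    print("Start a new conversation:", should_start_fresh(estimates))
```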

What Are Tokens and Why Do They Impact Performance?

Claude AI uses tokens as the basic units for processing and analyzing text input.

Instead of reading complete words in the same way humans do, Claude breaks text into smaller pieces known as tokens.

A token may represent a full word, part of a word, a number, or even a punctuation mark.

Every part of a conversation consumes tokens. This includes user prompts, Claude’s replies, uploaded documents, instructions, code snippets, and system commands.

As conversations become longer and more detailed, token usage increases rapidly.

For example, a short and focused question may use only 50 to 100 tokens, while generating a long-form article could require several thousand tokens.

Large uploaded files, such as PDFs or research documents, may consume tens of thousands of tokens at once.

When these token counts continue accumulating throughout an extended conversation, Claude AI may become overloaded and begin slowing down.

This can lead to delayed responses, incomplete outputs, reduced accuracy, or the system freezing entirely.

Understanding token consumption is not about limiting how you use Claude AI. Instead, it helps users interact with the system more strategically, allowing them to maintain faster responses, better accuracy, and overall higher performance.
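For quick planning, a character-based estimate is often good enough. The sketch below assumes roughly four characters per token, a common rule of thumb for English text rather than Claude's actual tokenizer, so treat the results as ballpark figures only.

```python
import math

CHARS_PER_TOKEN = 4  # rough rule of thumb for English text, not an exact figure


def estimate_tokens(text):
    """Very rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


if __name__ == "__main__":
    question = "What are the main causes of slow responses in long chats?"
    print(estimate_tokens(question))
```

Real counts depend on the language, formatting, and the specific model, so leave a generous margin when working near a limit.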

Most Common Reasons Why Claude AI Becomes Unresponsive

Once you understand context windows and token limitations, it becomes much easier to identify the main reasons why Claude AI may stop responding during conversations.

Long Conversation Duration

One of the most common reasons Claude AI becomes unresponsive is excessively long conversations.

Many professionals use Claude for writing detailed reports, developing software, conducting research, or creating marketing strategies within a single conversation that may continue for hours or even days.

Although this workflow may feel convenient, it significantly increases Claude’s workload because the model must analyze an increasingly large amount of information before generating every new response.

Uploading Large Documents

Uploading documents into Claude is a common method for analyzing, summarizing, or processing large amounts of information.

However, large files such as lengthy PDFs, detailed spreadsheets, research documents, or coding files consume a significant number of tokens and greatly increase the size of the active context window.

Uploading several large documents simultaneously can place heavy pressure on the system, resulting in slower performance and lower-quality outputs.

Overloaded and Multi-Task Prompts

Another extremely common issue is creating prompts that ask Claude AI to perform too many tasks at once.

For example, requesting Claude to research a topic, write an article, optimize it for SEO, summarize it, edit it, and generate social media posts in a single prompt can overwhelm the system.

While Claude is capable of handling multiple tasks, it generally performs much better when instructions are separated into smaller and more focused steps.
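As an illustration, the combined request from the example above can be turned into a series of focused prompts that are sent one at a time. The helper name and the prompt wording are hypothetical; this is only one possible way to structure the steps.

```python
def build_step_prompts(topic, tasks):
    """Turn a list of tasks into one focused prompt per step."""
    prompts = []
    for number, task in enumerate(tasks, start=1):
        prompts.append(f"Step {number} of {len(tasks)} for '{topic}': {task}")
    return prompts


if __name__ == "__main__":
    steps = [
        "Research the topic and list the key points.",
        "Write the article from the approved key points.",
        "Optimize the article for SEO.",
        "Write three social media posts based on the article.",
    ]
    for prompt in build_step_prompts("context windows", steps):
        print(prompt)
```

Sending each prompt only after reviewing the previous result also makes it easier to catch problems early.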

Constant Repetition of Instructions

Some users repeatedly remind Claude to “be professional,” “optimize for SEO,” or “keep responses brief” throughout the conversation.

Although these reminders are usually well-intentioned, they unnecessarily increase token usage because Claude already remembers previous instructions within the active context window.

Repeating the same instructions too often can gradually reduce overall efficiency during long conversations.
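If prompts are assembled by a script or a template, repeated reminders can be filtered out before sending. The sketch below is a simple, hypothetical helper that drops any line matching an instruction already given earlier in the conversation; it only catches exact repeats, not rephrasings.

```python
def drop_repeated_instructions(new_prompt_lines, already_given):
    """Remove lines that repeat an instruction already sent.

    Comparison ignores case and surrounding whitespace.
    """
    seen = {line.strip().lower() for line in already_given}
    return [line for line in new_prompt_lines
            if line.strip().lower() not in seen]


if __name__ == "__main__":
    earlier = ["Be professional.", "Keep responses brief."]
    draft = ["Be professional.", "Summarize section 2 in five bullet points."]
    print(drop_repeated_instructions(draft, earlier))
```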

Busy Servers

In some cases, Claude AI may respond slowly simply because the platform is experiencing heavy traffic during peak usage hours.

How to Tell Whether Your Claude Conversation Is Suffering From Context Overload

Recognizing context overload at an early stage makes it much easier to solve the problem before the conversation becomes completely unresponsive.

Below are some of the most common signs that indicate your Claude AI conversation may be approaching its context limit.

Slower Response Generation

If Claude begins taking noticeably longer to generate replies compared to the start of the conversation, this is often a strong indication that the context window is becoming overloaded.

Responses Are Cut Off Midway

In some situations, Claude may start generating a response but fail to complete it properly.

Responses may suddenly stop in the middle of a sentence or even halfway through a word. This often happens when the system struggles to manage the amount of information stored in the active context window, and it can also happen when a single reply reaches the maximum output length.

Claude Forgets Previous Instructions

Another common sign of context overload is when Claude begins ignoring or forgetting instructions that were previously provided.

As a result, the AI may generate responses that are unrelated, inconsistent, or completely different from the original requirements.

Lower Accuracy and Relevance

When the context window becomes overloaded, Claude may struggle to remember important details from earlier parts of the conversation.

This can lead to more generic answers, factual mistakes, or responses that are less relevant to the ongoing discussion.

Overall Slow or Unresponsive Behavior

If the entire conversation feels unusually slow, delayed, or unresponsive, it is often a clear sign that the current session has become overloaded and that starting a fresh conversation may be the best solution.

Avoid Uploading Unnecessary Large Files

Large files consume a significant portion of Claude AI’s context window and can quickly reduce overall performance.

Whenever possible, upload only the specific sections or excerpts that are relevant to your current task instead of entire documents.

This helps reduce token usage and allows Claude to focus more efficiently on the information that actually matters.
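When the source document is available as plain text, the relevant excerpts can be pulled out before uploading. The following is a minimal sketch that keeps only paragraphs mentioning chosen keywords; the function name is hypothetical, and simple keyword matching will miss passages that use different wording.

```python
def select_relevant_paragraphs(document, keywords):
    """Return only the paragraphs that mention at least one keyword."""
    wanted = [keyword.lower() for keyword in keywords]
    # Paragraphs are assumed to be separated by blank lines.
    paragraphs = [p.strip() for p in document.split("\n\n") if p.strip()]
    return [p for p in paragraphs if any(k in p.lower() for k in wanted)]


if __name__ == "__main__":
    report = (
        "Pricing is tiered by seat count.\n\n"
        "The team met in May to review the roadmap.\n\n"
        "Refund policy: requests are accepted within 30 days."
    )
    for excerpt in select_relevant_paragraphs(report, ["pricing", "refund"]):
        print(excerpt)
```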

Keep Conversations Focused

Mixing unrelated topics within the same conversation can increase complexity and confuse the AI model over time.

It is generally better to keep each conversation dedicated to a single project or objective.

Focused conversations help Claude maintain better context awareness, resulting in more accurate and relevant responses.

Restart Conversations Before Performance Drops Severely

If you begin noticing slower responses, incomplete outputs, or reduced accuracy, it is often a good idea to start a fresh conversation before the issue becomes more serious.

Restarting early can prevent major context overload problems and maintain a smoother workflow.
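A fresh conversation works best when it opens with a short summary of what matters from the old one. The sketch below shows one hypothetical layout for such a handoff message; the field names are assumptions and can be adapted to any project.

```python
def build_handoff_prompt(goal, decisions, next_task):
    """Compose an opening message for a fresh conversation."""
    lines = [f"Project goal: {goal}", "Decisions so far:"]
    lines += [f"- {decision}" for decision in decisions]
    lines.append(f"Next task: {next_task}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_handoff_prompt(
        goal="Launch guide for the new onboarding flow",
        decisions=["Use a formal tone", "Target length is 1,500 words"],
        next_task="Draft the introduction.",
    ))
```

Keeping the summary to a handful of lines preserves the important context without carrying over the full token cost of the old conversation.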

Be Patient During Peak Usage Hours

Occasionally, slow responses may simply be caused by high server traffic rather than context overload.

During peak usage periods, waiting a few moments or trying again later may help restore normal performance.
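For scripted workflows, "try again later" is usually implemented as a retry with increasing delays. The sketch below is a generic pattern, not tied to any particular client library: `send` stands for whatever function makes the request in your own code and is assumed to raise an exception when the request fails.

```python
import time


def retry_with_backoff(send, attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call send() until it succeeds, doubling the wait after each failure.

    send: any zero-argument callable that raises an exception on failure.
    """
    for attempt in range(attempts):
        try:
            return send()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt)  # waits 1s, 2s, 4s, ...
```

In real code it is better to catch only the specific errors that signal a busy or overloaded service, so that genuine bugs are not retried.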

Creating a Productive Long-Term AI Process

For businesses and individuals who rely on Claude AI as a core productivity tool, building a consistent and efficient workflow is essential.

Many highly productive Claude AI users follow a set of proven practices that help them maintain efficiency and achieve better long-term results.

One common strategy is preparing prompt templates for frequently repeated tasks. This eliminates the need to recreate instructions every time a new conversation begins and helps maintain consistency across projects.
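A prompt template can be as simple as a string with named placeholders. The sketch below uses Python's standard `string.Template`; the template text, field names, and defaults are illustrative assumptions, not a recommended wording.

```python
from string import Template

# Reusable brief for a recurring task; placeholders start with "$".
ARTICLE_BRIEF = Template(
    "Write a ${length}-word article about $topic for $audience. "
    "Tone: $tone."
)


def render_brief(topic, audience, length=800, tone="professional"):
    """Fill in the reusable template for one specific request."""
    return ARTICLE_BRIEF.substitute(
        topic=topic, audience=audience, length=length, tone=tone
    )


if __name__ == "__main__":
    print(render_brief("context windows", "marketing teams"))
```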

Another important practice is avoiding the storage of important outputs directly inside long conversation histories.

Instead, experienced users prefer storing content in external systems such as document editors, content management systems (CMS), cloud storage platforms, or project management tools.

In addition, productive AI users regularly review their workflows to identify which tasks are being completed effectively and which prompts may require adjustments or entirely new approaches.

Organizations and professionals who consistently apply these methods often experience major improvements in productivity, workflow efficiency, and overall satisfaction with AI-assisted processes.

At Parix.ai, we specialize in building intelligent AI-powered workflow automation that helps businesses fully benefit from advanced technologies such as Claude AI.

FAQ

Q: Why does Claude AI freeze in the middle of a conversation?

A: Claude AI usually freezes because the conversation becomes too large and complex for the system to process efficiently.

Long conversations, multiple uploaded files, and prompts containing several tasks at once can significantly increase token usage and overload the context window.

Q: How can I stop Claude AI from freezing during conversations?

A: One of the best solutions is to start a new conversation and provide a brief summary of the previous context instead of continuing an overloaded chat.

You can also improve performance by breaking large tasks into smaller steps, using focused prompts with a single objective, and avoiding unnecessary file uploads.

Q: Does Claude AI have a token or context limit?

A: Yes. Claude AI operates within a context window that limits how much information it can process during a conversation.

As token usage increases, performance may gradually decline, leading to slower responses and reduced accuracy. Some Claude AI models can support context windows of up to 200,000 tokens.

Q: What happens when Claude’s context window becomes overloaded?

A: When the context window becomes too full, Claude AI may struggle to prioritize information correctly.

Earlier instructions may lose importance, causing the AI to ignore or contradict previous requirements. Starting a fresh conversation with a concise summary often helps solve this issue.

Q: Is Claude AI slowdown caused by Anthropic server issues?

A: Occasionally, Claude AI may slow down because of high server traffic during peak usage periods.

However, in most situations, performance issues are caused by context overload rather than server-related problems.

Optimizing conversation length, reducing token usage, and improving prompt structure usually resolves these issues effectively.

Q: Can businesses effectively use Claude AI for professional work?

A: Yes, absolutely. Businesses that structure their workflows properly and use Claude AI strategically can achieve excellent results, even when working on highly complex tasks and large-scale projects.

Conclusion

In conclusion, Claude AI is one of the most advanced and versatile artificial intelligence tools available to businesses and individuals in 2026.

Its ability to handle complex and detailed tasks efficiently makes it highly valuable across a wide range of professional applications.

However, even the most advanced AI systems can only perform effectively when they are used correctly.

Issues such as freezing, lagging, and delayed responses are among the most common problems users experience with Claude AI. In most cases, these issues are directly related to the size and complexity of the conversation.

Fortunately, these problems can usually be solved with a few simple workflow improvements.

By applying the strategies discussed throughout this guide, users can work with Claude AI more efficiently and avoid wasting time on unproductive or overloaded conversations.

Businesses that integrate these best practices into their AI workflows often discover that Claude AI becomes an extremely valuable and productive part of their operations.

If your company is looking to build smarter and more efficient AI-driven workflows, our team at Parix.ai can help.

We specialize in designing and implementing intelligent automation solutions that improve productivity, streamline operations, and enhance business performance.

Contact us at Parix.ai to learn more about intelligent workflow automation and AI-powered business solutions.